ChatGPT in Education: Risks of AI-Powered Technology

Will the AI-powered ChatGPT kill education as we know it? Probably not, but the disruption we are now witnessing will change education for good.

ChatGPT, powered by AI technology, will disrupt education as we know it today and reshape how educational institutions approach educational materials and interaction with students.

This article does not focus on the positive use cases of ChatGPT, although there are certainly many of them.

Instead, it looks at the underlying risks for educational institutions, which are numerous:

- Content production

- Plagiarism issue

- Ethical issues

These three issues are probably at the core of current and future discussions about the risks of using AI-powered technology in education.

Content production

We tried ChatGPT to better understand what it can do and where its limitations lie.

We were blown away by the results it can produce. Personally, I have never seen anything similar.

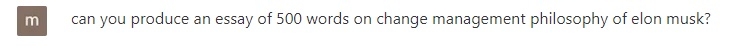

We asked ChatGPT to produce a 500-word essay.

ChatGPT replied:

This looks pretty good as an initial outcome, and at first sight it would be quite difficult to tell whether it was written by a machine or by a human.

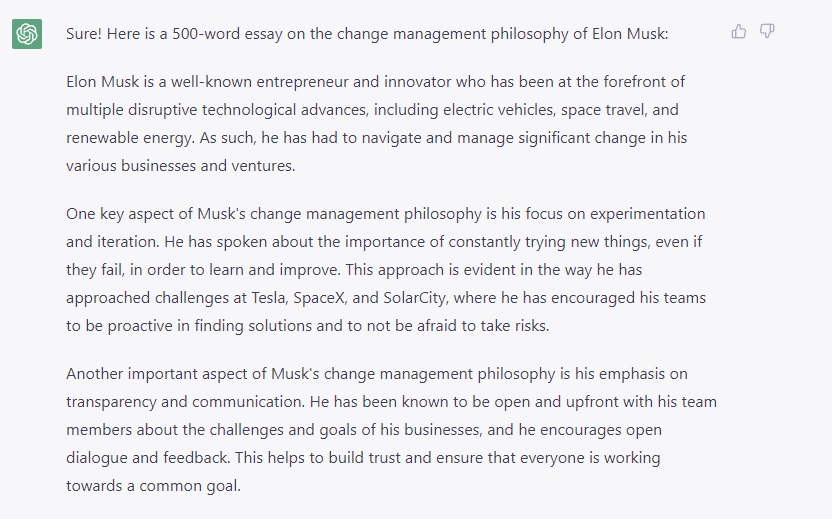

Next, we asked ChatGPT to make the reply a bit more academic, with clearer introduction, discussion and conclusion sections.

Here is the result. Quite impressive.

We then submitted the generated essay to a well-known plagiarism checker, and it flagged only 2% of the text as plagiarized!

This raises many ethical questions, along with questions about intellectual property and copyright. Ultimately, what is the student's involvement and intellectual contribution in this approach? That is the right question.

We asked ChatGPT: Is it ethical to use the content that you have created?

This was the answer: As an AI language model, I am not able to hold intellectual property rights or have personal ethical views. As a language model, my primary function is to assist users in generating human-like text based on the prompts and information provided to me. I do not have the ability to own or claim ownership of the content that I generate, and it is up to the user to determine how to use the content.

It is important to consider the ethical implications of using any content, including text generated by a language model. If you plan to use the content for any purpose, it is always a good idea to ensure that you have the necessary permissions and that you are not infringing on any copyright or intellectual property rights. It is also important to ensure that the use of the content aligns with your own personal and professional ethical standards.

Currently, I see some important questions that will have to be addressed urgently by all educational institutions when it comes to finding the right approach.

Some suggest that the right direction is to make assignments more specific and context-based so that an AI chatbot cannot easily address them. Others propose switching to more project-based assignments, where a simple question cannot be met with a simple answer.

I believe the right direction is to make assignments more complex in terms of context, assumptions and basic foundations. This would require students to take a far more complex analytical approach, resulting in a final assignment that cannot be tackled by an AI-fuelled robot.

Plagiarism issue

Plagiarism is one of the trickiest aspects of student assignments. Not only does an educational institution not want students to plagiarize, but, most importantly, it wants their assignments and submissions to contribute to the expected learning outcomes of the course.

Ethical concerns

Another topic relates to ethical challenges. How ethical is it for a student to use someone or something else to help write the content? This question demands further thought, but generally speaking it is not simple to avoid some unethical situations, because we live in a big data world where no one (and I mean it) really does anything from scratch. Ask any programmer on the planet how they start a new project, and each of them will confirm that they do not start from scratch but rely mostly on open source foundations.

Conclusion

AI-powered technologies are now part of our reality, and they arrived probably much faster than we could ever have imagined.

When one of the OpenAI/ChatGPT project stakeholders was asked three years ago how the project would generate revenue, he replied that they would first build the technology and then ask the technology itself: “Tell us now, how can we make some revenue from you?”

Recommendations

Here are some tips educators can try to implement to tackle some of the above issues:

- Try to choose more complex and context-specific assignments

- Avoid assignments that are too generic or whose answers are openly and easily available (for example, avoid assignments that simply refer to a specific party, firm, stakeholder group, international organisation, or state government)

- Avoid assignments built around topics, trends, or issues that have already been extensively discussed or analysed

- Make assignments more practical in nature and draw more heavily on what was actually taught in class (e.g., ask students to provide a practical case based on the specific assignment analyzed in the classroom)

- AI is not perfect and can sometimes make things up, so check any facts, events or analysis it provides
